- February 16, 2022

- Noam Chai

- 0

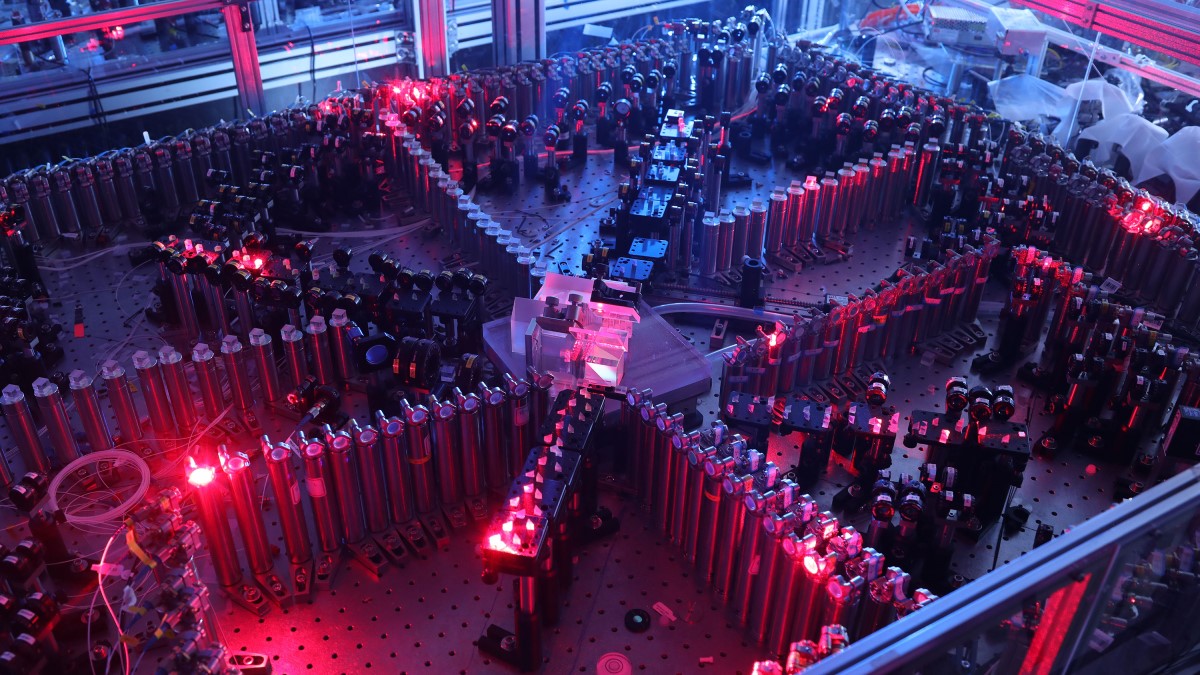

Boson sampling is one of the few techniques that have demonstrated quantum supremacy over classical computers and is the only one yet that was performed at room temperature. Boson sampling was popularized in recent years due to the number of successful experiments with photons. Light-based instruments use single-photon sources generated by PPKTP crystals with high non-linear coefficients to fully utilize the quantum properties of light.

Here is a thought experiment for you – would you rather choose a pen and paper to perform basic calculus or an abacus? You would probably choose a pen and paper; after all, moving beans takes time, right? Now, what if I told you that the number of steps is much smaller with an abacus? In his book “Surely You’re Joking Mr. Feynman!”, the renowned physicist Richard Feynman shared with the readers that he was challenged by a Japanese abacus expert in a speed contest. To Feynman’s surprise, the abacus was much faster in addition and multiplication, however it was not faster in division and cube roots. If we estimate the number of steps is needed to compute addition, the abacus can also beat the modern computer! But sure, the number of steps is not the only thing that dictates computational speed; it’s the electronic design that allows computers to perform computation faster than any analog device.

On some occasions, when the number of steps is extremely large, even supercomputers will take forever to perform. In that case, computation speed relies heavily on algorithm efficiency. There are some problems scientists are eager to crack but know that today’s technology cannot solve in a reasonable amount of time. Molecule interactions, Climate change, and the Stock market are just a few examples experts wish to simulate but do not have the extreme computational power or an efficient algorithm to make it happen. In the last century, Richard Feynman addressed this issue and suggested that a computer based on quantum principles is required if we wish to simulate nature. In recent years, the quantum hype triggered major tech companies to develop their version of quantum computers, and the race has just begun.

What is Boson Sampling?

Their first goal was to confirm quantum supremacy over classical computers. It is very tricky to verify that we have crossed supremacy, especially since it depends on the problem we wish to solve, but on the other hand, we have some promising innovations in line. A decade ago, “boson sampling” was strongly suggested to demonstrate quantum supremacy because it was proven mathematically to be extremely complex. The problem deals with N bosons (photons, for instance) traveling in a circuit of M modes (like beam splitters). At the end of this circuit, we ask the probability of finding the N bosons in a certain configuration. This problem is very similar to Galton board – balls fall off from a narrow hall and encounter a series of obstacles before they reach the ground. The result of this experiment is a Gaussian distribution which appears after a substantial amount of balls have fallen. What would happen if we engineer a similar device in the quantum world? We get the boson sampling problem by replacing photons with balls and beam splitters with obstacles.

Why is Boson Sampling considered to be extremely difficult to predict?

As we mentioned before, it has been proven that this problem is classified as a #P problem, more complicated than NP problems, making it almost impossible to perform with classical computers. Some scientists believe it is the first example that breaks the extended Church-Turing thesis, which states that any realizable physical system can be efficiently simulated with a Turing machine. The fact that boson sampling cannot be reduced to a polynomial complexity means that it cannot be simulated efficiently. Boson sampling is analytically challenging mainly because it requires a probability computation of all possible configurations. Each probability is proportional to the permanent (similar to the determinant but with a plus sign everywhere) squared of a unitary matrix representing the scattering process. Increasing the number of bosons or the number of scatterers increases the complexity of the process exponentially. For that reason, classical computers cannot predict the probability of a certain configuration to appear. If we are truly interested in finding an answer for that problem, we need to count beans! in other words, we let nature tell us the answer by designing an experiment that does precisely that.

Experimental progress

The most obvious way to achieve boson sampling is with light, but a single photon source is needed. There are many ways to create single photons, while the most widely used technique is “Parametric Down Conversion” (PDC). This technique is based on a non-linear crystal that converts a high-energy photon into two low-energy photons according to energy and momentum conservation laws. The main advantages of PDC sources are the high photon indistinguishability, collection efficiency, and relatively simple experimental setups. In Raicol, we offer one of the best quantum light sources generated by numerous non-linear crystals, primarily PPKTP crystals that are used to simulate boson sampling worldwide. Our in-house manufacturing process allows us to create the most efficient crystals with the highest non-linear coefficient upon request. Over the years, physicists have shown that boson sampling theory matches experiments involving a small number of photons, but it was difficult to expand this verification with a large number of particles. The breakthrough came in late 2020 when researchers from USTC demonstrated boson sampling with 50 photons and 100 modes for the first time. The Chinese group had to overcome major challenges, especially the probabilistic nature of PDC. The probability of generating 50 indistinguishable photons is proportional to generating a single photon to the power of 50. Since this probability is minuscule, the amount of time of generating 50 indistinguishable photons is enormously large. Instead, they designed the experiment with coherent squeezed states which are set deterministically. The approach is called Gaussian boson sampling (GBS) and has recently emerged as a new paradigm that not only can provide a highly efficient large-scale implementation but also offer potential applications in graph-based problems and quantum chemistry.

Criticism and Future prospects

Although configuring this optical system was a historic milestone in quantum computing, not all were convinced. First of all, it is impossible to check the validity of this experiment since we cannot simulate a similar process in a classical computer; Secondly, this is not a full quantum computer. Even though it is evidence of quantum supremacy, it was built specifically to compute a single task; Lastly, it is not clear which applications can utilize and benefit from this scheme. However, since it is a robust system capable of performing quantum computation at room temperature and based on photons that can easily be secured with quantum encryption methods, it holds huge promise in near-future technologies.

Raicol

Rosh Ha’Ayin 4809162, Israel